Service mesh and serverless deployment models represent the next phase in the evolution of microservice architectures. Service mesh enables developers to focus on business feature development rather than managing non-functional microservices capabilities such as monitoring, tracing, fault tolerance, and service discovery.

Open source service mesh projects, including Istio, Linkerd, and Kuma, use a sidecar, a dedicated infrastructure layer deployed alongside each app, to implement service mesh functionalities. For example, developers can improve monitoring and tracing of cloud-native microservices on a distributed networking system by using Jaeger with an Istio service mesh.

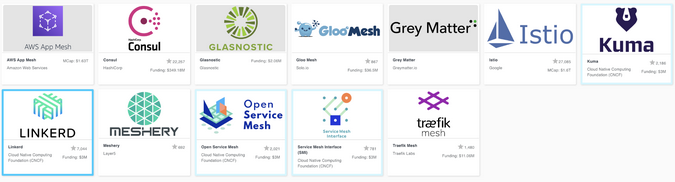

CNCF Service Mesh Landscape (Source: CNCF)

In this next phase of implementing service mesh in microservices, developers can advance their serverless development using an event-driven execution pattern. Serverless is more than a new deployment method; it also aims to move business processes from 24x7x365 uptime to on-demand scaling. Developers can leverage the traits and benefits of serverless deployment by using one of the open source serverless projects shown below. For example, Knative offers a fast, easy way to develop serverless applications on Kubernetes platforms.

CNCF Serverless Landscape (Source: CNCF)
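To make the shift from always-on uptime to on-demand scaling more concrete, here is a minimal sketch of a Knative Service that is allowed to scale down to zero pods when idle. The service name and the scale bounds are assumptions chosen for illustration, and the image is a placeholder.

```yaml
apiVersion: serving.knative.dev/v1
kind: Service
metadata:
  name: on-demand-example   # hypothetical name
spec:
  template:
    metadata:
      annotations:
        # Allow the revision to scale to zero pods when there is no traffic
        autoscaling.knative.dev/min-scale: "0"
        # Cap the number of pods created under load (illustrative value)
        autoscaling.knative.dev/max-scale: "5"
    spec:
      containers:
      - image: docker.io/sample_application   # placeholder image
```

Annotation names can differ between Knative releases, so check the autoscaling documentation for the version you run.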

Imagine combining service mesh and serverless for more advanced cloud-native microservices development and deployment. This combined architecture allows you to configure additional networking settings, such as custom domains, mutual Transport Layer Security (mTLS) certificates, and JSON Web Token authentication.

Here is a quick example of setting up service mesh on Istio with serverless on Knative Serving.

1. Add Istio with sidecar injection

When you install the Istio service mesh, you need to set the autoInject: enabled configuration for automatic sidecar injection:

    global:
      proxy:
        autoInject: enabled

If you'd like to learn more, consult Knative's documentation about installing Istio without and with sidecar injection.
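For context, these keys normally sit under the values section of an IstioOperator resource. The following is a minimal sketch, assuming you install Istio with istioctl and an operator file; the file name istio-operator.yaml is hypothetical, and a real installation will usually carry more settings.

```yaml
# istio-operator.yaml (hypothetical file name)
apiVersion: install.istio.io/v1alpha1
kind: IstioOperator
spec:
  values:
    global:
      proxy:
        # Default sidecar injection policy for pods in injection-enabled namespaces
        autoInject: enabled
```

A file like this would typically be passed to `istioctl install -f istio-operator.yaml`. Verify the exact fields against the documentation for your Istio version.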

2. Enable a sidecar for mTLS networking

To use mTLS network communication between the knative-serving namespace and another namespace where you want the application pod to run, enable sidecar injection:

    $ kubectl label namespace knative-serving istio-injection=enabled

You also need to configure PeerAuthentication in the knative-serving namespace:

    cat <<EOF | kubectl apply -f -
    apiVersion: "security.istio.io/v1beta1"
    kind: "PeerAuthentication"
    metadata:
      name: "default"
      namespace: "knative-serving"
    spec:
      mtls:
        mode: PERMISSIVE
    EOF

If you've installed a local gateway for Istio service mesh and Knative, the default cluster gateway name will be knative-local-gateway for the Knative service and application deployment.
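This step also involves the second namespace, the one where the application pod runs. A minimal sketch of how that namespace might be prepared is shown here, assuming a namespace called my-app (a hypothetical name) and the same injection label and PeerAuthentication mode used for knative-serving.

```yaml
apiVersion: v1
kind: Namespace
metadata:
  name: my-app                 # hypothetical application namespace
  labels:
    istio-injection: enabled   # same label as applied to knative-serving
---
apiVersion: security.istio.io/v1beta1
kind: PeerAuthentication
metadata:
  name: default
  namespace: my-app
spec:
  mtls:
    # PERMISSIVE accepts both mTLS and plaintext; STRICT would reject plaintext
    mode: PERMISSIVE
```

Whether STRICT mode is workable depends on whether every client calling the application has a sidecar, so treat the mode here as a starting point rather than a recommendation.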

3. Deploy an application for a Knative service

Create a Knative service resource YAML file (e.g., myservice.yml) to enable sidecar injection for a Knative service.

Add the sidecar.istio.io/inject="true" annotation to the service resource:

    apiVersion: serving.knative.dev/v1
    kind: Service
    metadata:
      name: hello-example-1
    spec:
      template:
        metadata:
          annotations:
            sidecar.istio.io/inject: "true" # (1)
            sidecar.istio.io/rewriteAppHTTPProbers: "true" # (2)
        spec:
          containers:
          - image: docker.io/sample_application # (3)
            name: container

In the code above:

(1) Adds the sidecar injection annotation.

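(2) Rewrites the application's HTTP readiness and liveness probes so that they are routed through the sidecar and keep working when mTLS is enabled.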

(3) Replace the application image with yours in an external container registry (e.g., DockerHub, Quay.io).

Apply the Knative service resource above:

    $ kubectl apply -f myservice.yml

Note: Be sure to log into the right namespace in the Kubernetes cluster to deploy the sample application.
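The JSON Web Token authentication mentioned earlier is configured through Istio resources rather than through the Knative service itself. The following is a rough sketch under stated assumptions: the namespace my-app, the resource names, the issuer, and the key set URL are all placeholders that you would replace with your own values.

```yaml
apiVersion: security.istio.io/v1beta1
kind: RequestAuthentication
metadata:
  name: jwt-example              # hypothetical name
  namespace: my-app              # hypothetical application namespace
spec:
  jwtRules:
  - issuer: "https://issuer.example.com"                          # placeholder issuer
    jwksUri: "https://issuer.example.com/.well-known/jwks.json"   # placeholder key set URL
---
apiVersion: security.istio.io/v1beta1
kind: AuthorizationPolicy
metadata:
  name: require-jwt              # hypothetical name
  namespace: my-app
spec:
  action: ALLOW
  rules:
  - from:
    - source:
        # Only allow requests that carry a valid token (any issuer/subject pair)
        requestPrincipals: ["*"]
```

RequestAuthentication on its own rejects requests with invalid tokens but still admits requests with no token, which is why the sketch pairs it with an AuthorizationPolicy. In a Knative setup you may also need to allow health check and metrics paths explicitly, so test a policy like this carefully before relying on it.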

Conclusion

This article explained the benefits of service mesh and serverless deployment for advanced cloud-native microservices architectures. You can evolve existing microservices to service mesh or serverless step-by-step, or you can combine them to handle more advanced application implementations with complex networking settings on Kubernetes. However, this combined architecture is still at an early stage because of its complexity and the lack of established use cases.
